As you sit luxuriating in the glow of your vivid, pixel-dense widescreen computer monitor, spare some kind thoughts for the pioneers of computing, who didn’t even have monitors at first.

Instead of curved screens, HDR color and endless display options, the pioneers had blinking lights, according to Computer History. That is, if they were lucky: some had only envelope-sized manila cards with patterns of rectangular holes mechanically punched into them.

Even when engineers and industrial designers figured out how to visualize the information from computers on screens, it was years — decades, really — before operators got even a sniff of the visual feast we all take for granted with today’s workstations and gaming monitors.

The Early Years

The earliest computers were row after room-filling row of electronics-filled cabinets, usually with little or no visual indication of the information they were processing and spitting out. For that, you needed a paper printout.

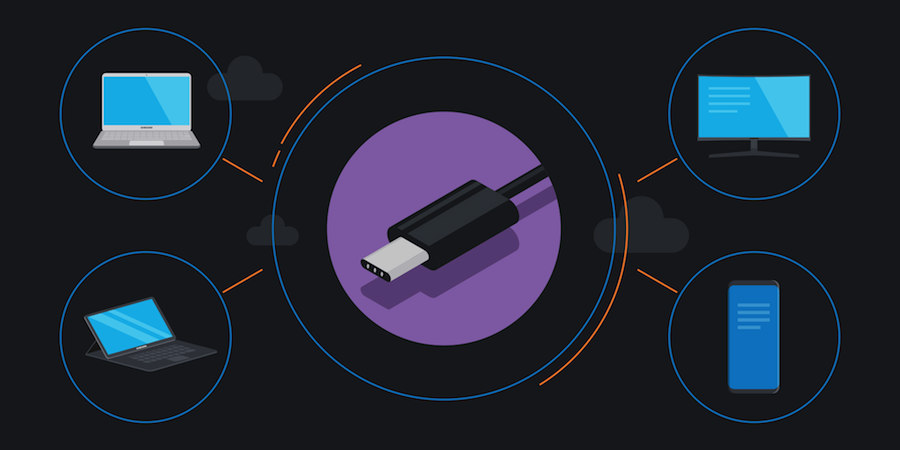


According to History-Computer, it took until the early 1970s for cathode-ray tube (CRT) technology, already the core of television sets, to be widely adapted for computer displays. Although color televisions were becoming increasingly common in homes by then, the first CRT monitors for mainframe systems were monochrome, and in most cases they displayed nothing but text.

From TV to Computer

By the late 1970s and early 1980s, TV sets were being co-opted as computer monitors. According to CNET, computer makers developed hardware and code that converted the output of early PCs into a signal portable consumer televisions could display. The resolution was low and the colors were limited, but at the time this was a revelation, even a revolution.

It took a few more years, until personal computers reached the mass market in the mid-to-late 1980s, for dedicated monitors to be developed and marketed for those boxy computer workstations. At first, they were proprietary: each monitor worked only with specific computers, at specific settings. There was no mixing and matching.

According to Techopedia, that changed with the introduction of multisync technology, which opened the field to desktop monitors that were not tied to specific brands and models. Multisync enabled a monitor to support multiple resolutions, refresh rates and scan frequencies. Finally, a monitor could outlive its computer: when a PC was replaced, the existing monitor could work with the new one.

But these were still big, heavy CRT-based screens. Even a 19-in. CRT monitor was back-strainingly heavy and took up much, if not most, of the open space on a workstation desk.

LCD Takes Center Stage

It wasn’t until the late 1990s and early 2000s that liquid-crystal display (LCD) technology matured enough to graduate from its main use, small monochrome screens for pocket calculators, to large color desktop displays. LCDs dramatically reduced the overall dimensions and weight of desktop displays, freed up desk space and lowered energy consumption. LED backlighting gradually replaced fluorescent backlights, further reducing weight and energy usage.

Today, LCD utterly dominates the monitor business. In recent years, screens have steadily grown bigger, brighter and lighter, and new form factors have been introduced, notably widescreen and super-widescreen models that make multitasking easy. Seen at first as a novelty, curved LCD monitors are finding homes with gamers who want immersive visual environments and with office workers who like the ergonomic design: the curved surface reduces eye strain by keeping the focal distance more uniform across a wide screen.

But monitors are advancing in more ways than shape. Today’s premium monitors support technologies such as high dynamic range (HDR) and quantum dots, a film added to an LCD’s layers that substantially widens the range of colors the screen can display.

Multifunctional Technology

New technologies such as USB Type-C, a connector standard for electronic devices, are ending monitors’ days as “dumb displays.” With full USB-C support, the monitor becomes the workstation hub, decluttering desks by requiring fewer cables while delivering 4K or even higher-resolution visuals.

Computing pioneers were visionaries, but it’s doubtful many of them could have imagined a time when an office worker would pull a super-thin, ultrapowerful notebook from her designer shoulder bag, connect a single cable and get to work on a 49-in. curved monitor with room for every work tool she needs on the screen in front of her.
